Modeling the Peer Review Process: a Post-Publication Peer Review!

This post is so meta. So, peer review is one of the core concepts in science upon which the apparatus of scientific pursuit and dissemination is hung. Peer review means that science is not done in a vacuum. When I do some science and then write it up to share with the world, other scientists, ideally those highly likely to be able to understand what I did and how well I did it, read my paper and make corrections, assessments, recommendations, criticisms, etc. Then, an editor, who is also a scientist, will decide whether to publish the paper or not based on that critique and how I respond to it. Grants are peer reviewed, too. Before a grant is funded, it will be scored by scientists in the field who assess the likelihood that the grant will be successful based on the merit of the science and the track record of the investigative team.

There are lots of problems with the peer review process. It’s long. Reviewers are volunteers, so they may not put their best efforts into the process. Finding appropriate reviewers can be difficult for cutting-edge science or interdisciplinary science. Reviewers are not machines, and may have unknown biases and personal grudges. And the entire process can feed into the glamor-magazine disaster that science has been beholden to for decades now, where the quality of a journal is used as a proxy for the quality of a paper, when in reality there are myriad reasons that an excellent paper might be published in a smaller, less prestigious journal. Some argue that the only true review of a paper is the post-publication review in the arena of ideas. That all science should just be published openly, and then the good papers will rise to the top of the field. I don’t buy that entirely, but I’m intrigued by it.

So, today on Infactorium, I will do a post-publication peer review of a paper (which has successfully navigated the peer review process) about using agent-based modeling to study the peer review process! It’s a review of a reviewed paper about review! Charlie Kaufman and Spike Jonze could make another bad movie about it.

This paper(*) uses agent-based modeling to examine how peer review functions. Agent-based modeling is a system of using computer-generated autonomous entities called “agents” to perform tasks and interact with each other. Agents may be endowed with attributes – variables representing their unique characteristics – and rules of behavior governing how they interact with each other and their environment. Other systems commonly studied with agent-based models include cells in a tumor, birds in a flock, etc. Agent-based models are especially good at modeling large numbers of individuals that together make up a population which may exhibit emergent behavior. Think ants forming a community and building an anthill.

Allesina develops a model of the peer review system in an agent-based simulation. He does this in two basic scenarios: the way we have it now, where authors pick a journal, hope to be published, and repeat this process if they are unsuccessful (including a minor variation which allows pre-review rejection, or desk rejects, to make the scenario more realistic); and a second scenario where journals bid on papers (!).

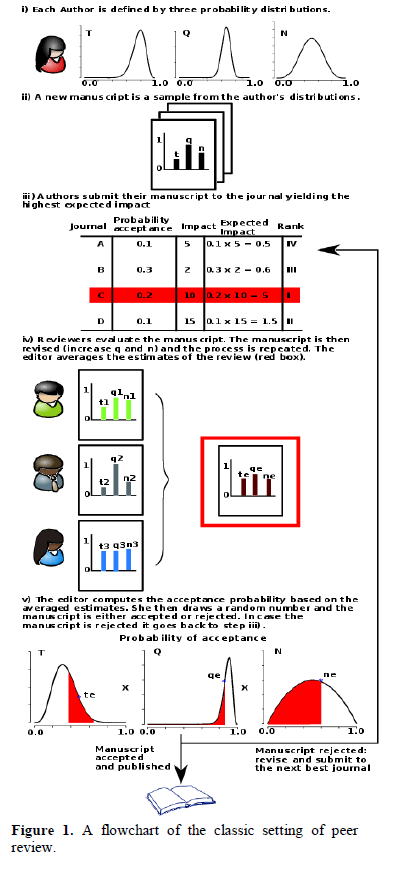

Allesina’s model treats each author as a ‘source’ for papers. Each paper has three attributes on which every journal scores it, {Topic, Quality, Novelty}, and each attribute is drawn from a probability distribution, so a paper is represented as a sample from those distributions: (t,q,n). Under the traditional model, the one we have now, the author then picks a journal by weighing the journal’s impact against the probability of acceptance there, given the expected values of the paper’s (t,q,n)-tuple.
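
To make the (t,q,n) bookkeeping concrete, here is a minimal Python sketch of a paper and of the author's choice of journal. The post does not give the paper's actual distributions or its acceptance formula, so the uniform and beta draws, the `acceptance_probability` function, the "impact times probability" payoff, and every name below are my own assumptions for illustration, not Allesina's implementation.

```python
import random
from dataclasses import dataclass


@dataclass
class Paper:
    topic: float    # t: position on a 0..1 topic axis (assumed representation)
    quality: float  # q: 0..1
    novelty: float  # n: 0..1


@dataclass
class Journal:
    name: str
    topic: float      # the topic the journal prefers, on the same 0..1 axis
    impact: float     # payoff to the author if the paper is accepted
    threshold: float  # selectivity: higher means harder to get in


def sample_paper(rng):
    """Draw one paper; the distributions are placeholders, not the paper's."""
    return Paper(
        topic=rng.random(),
        quality=rng.betavariate(2, 2),
        novelty=rng.betavariate(2, 2),
    )


def acceptance_probability(paper, journal):
    """Assumed rule: topical fit scales the mean of q and n, then is compared to the threshold."""
    fit = 1.0 - abs(paper.topic - journal.topic)
    score = fit * (paper.quality + paper.novelty) / 2
    return max(0.0, min(1.0, score - journal.threshold + 0.5))


def choose_journal(paper, journals):
    """Pick the journal with the largest expected payoff: impact times chance of acceptance."""
    return max(journals, key=lambda j: j.impact * acceptance_probability(paper, j))


if __name__ == "__main__":
    rng = random.Random(42)
    journals = [Journal("Glamor", 0.5, 10.0, 0.9), Journal("Solid", 0.5, 3.0, 0.3)]
    paper = sample_paper(rng)
    print(paper, "->", choose_journal(paper, journals).name)
```

The point of the sketch is only the trade-off: a high-impact journal is worth a gamble when the paper is strong and on-topic, and a safer journal wins otherwise.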

Each reviewer in a pool then makes their own estimate of (t,q,n) for the paper, with the accuracy of the estimate depending on the reviewer’s familiarity with the topic (interestingly, this allows reviewers in this model to give inaccurate reviews!). The paper is then revised, which increases q and n (but not t), yielding the revised manuscript I’ll call (t,q’,n’). Finally, the paper is accepted or rejected by a random draw whose odds are set by the paper’s final quality and the journal’s selectivity threshold. Rejected papers are resubmitted to different journals up to five times, and then abandoned. This process is described in the paper’s figure 1.
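
The submit, review, revise, and roll-the-dice loop described above can be sketched in a few lines of Python. Again, this is my reading of the process and not the paper's code: the Gaussian noise model, the fixed revision boost, the acceptance formula, the cap of five attempts in total, and all function names are assumptions.

```python
import random

MAX_SUBMISSIONS = 5  # assumption: five attempts in total, then the paper is abandoned


def clamp01(x):
    return max(0.0, min(1.0, x))


def noisy_estimate(true_value, familiarity, rng):
    """A reviewer's estimate: the less familiar the reviewer, the noisier it is."""
    noise_sd = 0.3 * (1.0 - familiarity)  # assumed noise model
    return clamp01(rng.gauss(true_value, noise_sd))


def revise(paper, boost=0.05):
    """Revision raises q and n but leaves t alone: (t, q, n) -> (t, q', n')."""
    t, q, n = paper
    return (t, clamp01(q + boost), clamp01(n + boost))


def submit_until_done(paper, thresholds, rng, n_reviewers=3):
    """Try journals in order (one selectivity threshold each) until accepted or abandoned."""
    reviews_done = 0
    attempts = 0
    mean_estimate = None
    for threshold in thresholds[:MAX_SUBMISSIONS]:
        attempts += 1
        # Each reviewer gets a random familiarity with the topic.
        estimates = [noisy_estimate(paper[1], rng.random(), rng) for _ in range(n_reviewers)]
        mean_estimate = sum(estimates) / n_reviewers  # reported only, not used to decide
        reviews_done += n_reviewers
        paper = revise(paper)
        # Acceptance is a random draw set by final quality and the journal's threshold.
        p_accept = clamp01(paper[1] - threshold + 0.5)
        if rng.random() < p_accept:
            return {"status": "accepted", "attempts": attempts,
                    "reviews": reviews_done, "mean_estimate": mean_estimate}
    return {"status": "abandoned", "attempts": attempts,
            "reviews": reviews_done, "mean_estimate": mean_estimate}


if __name__ == "__main__":
    rng = random.Random(7)
    print(submit_until_done((0.5, 0.6, 0.6), [0.9, 0.8, 0.7, 0.6, 0.5], rng))
```

Note how the review count piles up: every rejection costs another full round of reviews, which is exactly the overhead the bidding scenario is designed to remove.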

The first variant allows the editor to reject (but not accept) a paper prior to review, through a process analogous to peer review that takes very little time.

The alternative method of peer review that the paper uses would represent a radical departure from the current way of doing things. It creates a pre-review archive of papers, like arXiv. However, to submit a paper to the simulated preprint archive, the author must review three papers already in the archive (let’s ignore for the moment how the first three papers get submitted to the archive…). Authors select their “favorite” papers (i.e., the ones they are most suited to review), and review them. Once a paper receives 3 reviews, it is revised by its author, and resubmitted to the archive for re-evaluation. The paper is not explicit in its methods in describing whether the revision is seen by the same three reviewers, or must undergo the random-chance revision process again. I assume the former.

Once the twice-reviewed manuscript is done, it is sent to a pool of aptly named “Ripe Manuscripts”. Journal editors then review all manuscripts and “bid on” (offer to publish) any manuscript that satisfies their publication thresholds and survives a roll of the dice. Authors then accept the bid of the journal that has the highest impact for them, based on their own peculiar desires. It’s a really interesting model. I have strong doubts that a real-world version could be implemented, but it’s a fascinating proposal to study in simulation.
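
Here is a small Python sketch of the bidding step, as I understand it from the description above. The `bid_chance` parameter stands in for the "roll of the dice", and I reduce the author's "peculiar desires" to simply taking the highest-impact bid; both simplifications, along with the function names and example journals, are my assumptions.

```python
import random


def collect_bids(quality, journals, rng, bid_chance=0.8):
    """Each journal is (name, impact, threshold); it bids if the paper clears
    its threshold and survives a random draw."""
    bids = []
    for name, impact, threshold in journals:
        if quality >= threshold and rng.random() < bid_chance:
            bids.append((name, impact))
    return bids


def accept_best_bid(bids):
    """The author takes the highest-impact offer, or None if nobody bid."""
    if not bids:
        return None
    return max(bids, key=lambda bid: bid[1])[0]


if __name__ == "__main__":
    rng = random.Random(3)
    journals = [("High", 10.0, 0.9), ("Mid", 5.0, 0.5), ("Low", 1.0, 0.1)]
    for quality in (0.95, 0.6, 0.05):
        print(quality, "->", accept_best_bid(collect_bids(quality, journals, rng)))
```

With enough journals at enough different thresholds, almost every ripe manuscript attracts at least one bid, which is one intuition for why nearly everything gets published in this scenario.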

The results were very interesting as well. The same 500 authors and 50 journals were simulated for 10 years in each of the three scenarios. In the classic setting without editorial rejection (A), 67.6% of papers were eventually published somewhere. In the setting with editorial rejection (B), only 41.5% of papers were eventually published. However, in the bidding scenario (C), 97.6% of manuscripts were eventually published. Another really interesting bit is in the amount of work for reviewers. Under (A), 9.64 reviews per manuscript had to be performed. Under (B), 4.71. In (C), each manuscript received exactly three reviews (bearing in mind that re-review after revision counts as the ‘same review’).
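
One way to combine those two sets of numbers is to ask how many reviews the community spends per paper that actually gets published. This is my own back-of-the-envelope metric, not one reported in the paper, and it assumes the reviews-per-manuscript figures are averaged over all manuscripts, published or not.

```python
# Figures quoted above: (fraction of manuscripts published, reviews per manuscript)
scenarios = {
    "A": (0.676, 9.64),  # classic, no editorial rejection
    "B": (0.415, 4.71),  # classic, with editorial rejection
    "C": (0.976, 3.0),   # bidding
}


def reviews_per_published_paper(fraction_published, reviews_per_manuscript):
    """Total review effort divided by the number of papers that made it into print."""
    return reviews_per_manuscript / fraction_published


for label, (published, reviews) in scenarios.items():
    print(label, round(reviews_per_published_paper(published, reviews), 1))
```

Under that assumption the cost works out to roughly 14.3 reviews per published paper in (A), 11.3 in (B), and 3.1 in (C).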

Authors had more publications and higher average impact of their work in the bidding setting. Average time to publication of a manuscript went from (A) 22 months to (B) 16.8 months to (C) 8.7 months. As expected, mean quality of a paper was lowest in the bidding group, because nearly everything ended up published regardless of quality.

Allesina notes how idealized this simulation is. Authors know precisely the quality of their own work and the probability of being accepted by a particular journal. Reviews are all useful. However, the simulation shows just how responsive these systems are to both minor and major perturbations. The introduction of editorial rejection drastically reduces the number of papers and time-to-publication or abandonment. The wholesale change scenario (C) makes an even larger difference, with vastly more science published with dramatically reduced review effort and time-to-publication.

Allesina’s big takeaway here is not about a particular method of peer review (a far more complex model would be needed to have predictive validity and to draw real-world conclusions about the consequences of changes to the system, and Allesina notes that). It is that modeling these complex systems can provide useful insights, and make quantitative evaluations of proposed changes.

If I may briefly editorialize, this paper is an excellent illustration of my reluctance to wholly endorse the Open Access publishing model. I agree with the ideals behind it: more people should have more access to more science, and it should cost less and be more efficient. But the system is huge, complex, and has many semi-independent subsystems. Small changes to funding models, review process, access, etc., will likely have far-reaching consequences that we cannot know without careful study. So I endorse the open access goals of widespread dissemination of science. But one has only to look at the fiasco created by the public funding of science (another good ideal) to see that the unintended results of systemic change are not always good. I prescribe study. Funded study. By people like me. Or me.

_________________________________

(*) Allesina S (2012) “Modeling peer review: an agent-based approach”, Ideas in Ecology and Evolution 5(2): 27-35

This is a super cool post! Thanks for covering this paper!

I, too, wonder about open access and how possible it is to implement. Like you said, there are so many complex factors. One of the largest barriers being, of course, the prestige and “importance” of publishing in high impact journals.

Check out pubpeer.com/recent for a nice example of a centralized post-publication peer review hub.

Thanks!